US Judiciary Committee report reveals:

A preliminary report by the US Judiciary Committee uses hundreds of exhibits to lay bare the European Commission's practices of internet censorship. According to the report, commissioners spent years forcing the platforms to take measures against purported “disinformation” and even tried to influence the US presidential election, along with elections from Ireland to Romania.

On Tuesday, the Judiciary Committee of the US House of Representatives published an “Interim Staff Report” of a good 150 pages that subjects the censorship infrastructure the EU has built up over years to detailed analysis and criticism. Over the past year, the members of Congress repeatedly warned about the EU censorship laws, which they say also restrict the free speech of US citizens. In February 2025 the committee subpoenaed internal documents from the tech companies. Now the US lawmakers believe they have solid evidence for these accusations.

Thousands of Big Tech documents confirm, in the lawmakers' view, one impression: the EU has waged “a successful, decade-long campaign to gain global control over online narratives”. The path to control runs through the platforms' “community guidelines”, which determine “the boundaries of what can be discussed in the global marketplace”. These rules therefore had to be influenced, and to that end the European Commission issued various codes and founded forums over the years.

At first participation was voluntary, but internally the tech companies knew: “we don't really have a choice”. In total there were probably hundreds of meetings and just as many censorship requests to each individual tech company.

The forums were first and foremost meetings of the permanent EU task force, and the Big Tech representatives had to function as part of that task force. They were joined by further actors from industry, “civil society” and so-called “fact-checkers”, as one of the internal emails puts it. The direction of the discussion was always set by the European Commission. Decisions were then reached by supposed “consensus”, but in truth under pressure from the Commission, which at the latest since the introduction of the Digital Services Act (DSA) from 2022 onward has held a sharp sword over the companies: fines of up to six percent of global annual revenue can since be imposed on Facebook, X and others if the platforms do not play the way the EU wishes. The instrument was used for the first time against X, which refuses to comply with the requirements.

Over the years this involved quite different topics, such as mass migration, men in women's sports or the treatment of moderately severe illnesses. In October 2020, for instance, the Commission's Vice-President and Commissioner for “Values and Transparency”, Věra Jourová, approached the platforms quite informally. Jourová wrote an email to Microsoft, Facebook, Twitter, Bytedance (the parent company of TikTok) and Google. In it the commissioner made a “friendly request” for information on the “intensity of the campaign against Covid-19 vaccinations”. She also asked about any rule changes by the platforms. Jourová wrote all this, of course, “with the knowledge of the President” and no doubt on von der Leyen's behalf. The aim, then, was to clear criticism of the “vaccination” out of the way as early as possible and to take suppression and censorship measures, at that time still on a voluntary basis.

The US lever for change

The problem from the US point of view is that the same rules, guidelines and measures also apply to American citizens and thus effectively suspend the First Amendment. And here, apparently, lies the lever for US influence on the negotiations with the platforms, perhaps even on the EU regulations themselves. Senator Eric Schmitt wrote on X: “Far-left Eurocrats want social media companies to censor the online speech of Americans. We rejected European control over our free speech in 1776. We will not allow it in 2026.”

The emeritus health economist and current head of the National Institutes of Health (NIH), Jay Bhattacharya, congratulated the committee members on their work: “Foreign governments should not have a veto over the right to free speech in the US.”

This lever grows stronger as the lawmakers also point to attempts at election interference, not only in European states but also in the US. Thus “political officials at the highest level of the European Commission” are said to have called on TikTok to “more aggressively censor” US content ahead of the 2024 presidential election. It is also known that, before the conversation between Elon Musk and Donald Trump on X, Thierry Breton sent a shrill warning letter in which he threatened retaliatory measures under the DSA.

In parallel, other members of the Commission had demanded that the platforms set out how they intended to “moderate” posts about the US elections. Once again actively involved: the then Vice-President for Values and Transparency, Věra Jourová, who discreetly labeled such measures “election preparations” and even traveled to California specifically for the purpose. A presumably unprecedented reach by the EU's potentates onto US territory. The interference in the US election was evidently more than just a misstep by the loose cannon Breton.

There are also indications, however, that major elections in European and EU states led to waves of purges on online platforms: “policies, practices and algorithms” were “updated and refined”, measures were taken to reduce “disinformation”, and generative AI, which supposedly illustrates “disinformation”, was restricted. Alongside this, the left-dominated “fact-checkers” were sent out to “check” posts in the interest of governments. Action was also taken against “gendered disinformation”, meaning posts about female politicians or sexual minorities.

The Commission regularly called for election interference

According to the report, since the DSA took effect in 2023 the European Commission has influenced eight national elections in six countries, namely Slovakia, the Netherlands, France, Moldova (not an EU member), Romania and Ireland, and of course also the EU elections of 2024. For example, statements such as “There are only two genders”, which had become political under the banner of transgender ideology, were suppressed. The US lawmakers also found evidence that accusations of election interference, for instance by Russia in Romania, were invented: TikTok, at any rate, found no evidence of such interference in its rich trove of data. The EU's actions against the Romanian elections of 2024 are known to be among the most aggressive yet seen from the European Commission.

In October 2023 the Commission wanted to discuss TikTok's “risk assessment and mitigation measures” in The Hague in view of the Dutch elections. A similar meeting took place in 2025. Six weeks before the elections, representatives of Alphabet (Google), Meta (Facebook, Instagram), Microsoft, TikTok and X, as well as censorship NGOs, were invited to a “Round Table on Elections in the Context of the Digital Services Act”. Once again the subject was supposedly “harmful posts” whose reach was to be restricted, by no means unlawful ones. In 2024 the same procedure was followed in France and Moldova, and in 2024 and 2025 in Ireland as well. Here Google emphasized how it uses AI tools to filter out “false information”, while Microsoft (provider of LinkedIn and the search engine Bing) reported that it removes and downranks “misinformation”. Facebook/Meta also committed to new restrictions on free speech, which were not specified, however.

Concrete examples of guidelines changed at the EU's request are not lacking. The Chinese platform TikTok changed its guidelines in 2023 expressly in order to “ensure compliance with the Digital Services Act”. The revised guidelines provide, among other things, that certain types of content should not reach feeds. They are stripped of “For You Feed (FYF) Eligibility”. They can therefore still be found, for example on the creator's account or through a specific search, but TikTok no longer recommends them. The content excluded in this way includes “moderately harmful misinformation” about the “treatment of moderately severe illnesses”, which appears to mean Covid or whatever “pandemic” virus comes next.

Posts on the subject are said to take things out of context in order to mislead users about “topics of public importance”. Scientific data are also said to be misrepresented. That can of course always happen, but it is expressly pointed out only for this “topic of public importance”. In addition, posts with “marginalizing language” that could demean “protected groups” or normalize their “unequal treatment” are to be hidden. The TikTok eligibility standards currently state: “In times of crisis or unrest, we may interrupt repeated recommendations … so that your experience remains safe, varied and entertaining.”

First Covid critics were demonetized, now conservatives are to be targeted

The report of the US Judiciary Committee traces the development of the censorship measures back to 2015. In the following year, platforms such as Facebook, Instagram, TikTok and (as it then still was) Twitter were for the first time committed to censoring certain content, namely so-called “hateful conduct”. In 2018 a “Code of Practice on Disinformation” was adopted. Under it the platforms were urged to push back “disinformation”, that is, certain information that was in part truthful but was regarded as harmful in some sense. In 2017 Germany had enacted the Network Enforcement Act (Netzwerkdurchsetzungsgesetz) and begun, as the US report notes, the “enforcement of censorship laws at the national level”.

In 2022, when the DSA entered into force for large online platforms, the Commission updated its “disinformation code”. The major platforms now once again had to attend the meetings of a task force that met regularly to discuss the platforms' censorship efforts and to voice new censorship requests. There were six subgroups, which concentrated on topics such as “fact-checking”, elections or the “demonetization of conservative news media”. That means: conservative media were to be deprived of the ability to advertise for themselves via YouTube, Facebook and the like, and thus to earn money. As early as 2021 the Commission had called for critics of Covid measures and “vaccines” to be demonetized.

In the background stands a finding of the US representatives: the fact-checkers involved (such as NewsGuard or the Global Disinformation Index) regularly regard conservative expressions of opinion as “disinformation”, while left-progressive statements are deemed “trustworthy”. Later, positions on the war in Ukraine were also subjected to a grid of “true” and “false”, which the US lawmakers describe as “pseudo-science”. In this context too the question to the platforms keeps recurring: “What measures have you taken to reduce disinformation about the crisis?” For a long time the EU could count on the cooperation of the Biden-Harris administration.

From 2022 to 2024 alone, more than 90 meetings are said to have taken place between the platforms, Commission representatives and “censorious civil society organizations (CSOs)”, as the original puts it. It is clear that the majority of the platforms complied with the Commission's demands to suppress certain information regarded as “harmful”.

In 2023 Elon Musk and his platform Twitter (later X) left the EU censorship forum that was meant to ensure enforcement of the DSA. EU Commissioner Thierry Breton threatened consequences: “The obligations remain … Our teams will be ready to enforce them.”

A handbook on a questionable gray area

The EU Internet Forum (EUIF) had been founded back in 2015. According to the Commission, its task is to bring together “justice and home affairs ministers, Europol, online platforms, international partners and researchers” in order to “discuss the shared responsibility for ensuring online safety”. The US Judiciary Committee translates this as action against “lawful, non-violative speech”, which found expression in 2023, for example, in a “handbook” of the Internet Forum. It names the following elements as targets of the censors:

- “populist rhetoric”

- “anti-government/anti-EU” content

- “anti-elite” content

- “political satire”

- “anti-migrant and Islamophobic content”

- “anti-refugee/anti-immigrant content”

- “anti-LGBTIQ… content”

- “meme subculture”

What is meant is the EU handbook “Borderline Content. Understanding the Gray Zone”, in which the examples from the list are described as “borderline violative” (pages 3 f.). The handbook thereby postulates the existence of a “gray area” between clearly legal and “compliant” statements on the internet and what the Eurocrats, in a telling fit of acronym mania, mostly just call TVEC: “Terrorist & Violent Extremist Content”, which serves here as the North Star. But that content is not what the Eurocrats are concerned with in their “Borderline” handbook; they are concerned with statements that are perfectly legal but classified as harmful.

With reference to “academics and researchers”, it is claimed that content which is “normally, in a democratic setting, protected by the parameters of free speech” is inappropriate in public forums and consequently “borderline illegal”. Another snappy formulation is: “lawful but awful”. All this reveals, at most, is that the said “academics and researchers” lack insight and theoretical grounding. Conduct that is legal and protected offline must be so in the online space too.

DSA: a necessary law for the EU's bigwigs

What is really meant, then, is a kind of mandatory online etiquette, enforced by coercion against the platforms, under which applicable law and fundamental rights are suspended because certain forms of behavior are “inappropriate”. And whether something is appropriate or not is, of course, decided by the EU's powerful, who with the DSA finally have an effective means of coercion against the platforms at their disposal. That explains the inner ‘necessity’ of this EU law according to EU logic.

The examples selected by the US Judiciary Committee suffice to show the absurdity of the project, which is nevertheless already being implemented: certain supposedly populist forms of expression, criticism of government, criticism of the EU, “criticism of elites”, political satire, criticism of migration, criticism of LGBT dogma and “meme subculture” have long since become targets in various countries of thought police that are partly state-organized and partly rely on “civil society organizations” such as the notorious “Hate Aid”.

The realm of the illegal is being expanded beyond the measure set by the laws, rights and freedoms in the EU member states. In itself this is a first-rate scandal, which the US Judiciary Committee names here but which has hardly reached the public in European societies yet.

Addendum 1 =

Censorship, surveillance and election manipulation: the EU is intruding ever more insistently into the lives of its citizens. What once began as an economic and peace project has long since mutated into a fascistoid apparatus of oppression.

The second part of the investigative report presented by the Judiciary Committee of the US House of Representatives on the question of “the extent to which foreign laws, regulations and judicial orders compel, coerce or influence companies to censor speech in the United States” has confirmed all the accusations that the Trump administration has been leveling for a year, above all against the overreaching European Union, which is acting in ways ever more hostile to freedom and fundamental rights. The investigation concludes that the EU, “in a comprehensive, decade-long initiative, successfully pressured social media platforms to change their global content moderation rules, thereby directly interfering with Americans' online speech in the United States”. Although this is often presented as combating so-called “hate speech” or “disinformation”, the European Commission worked, the report says, to “censor true information and political speech on some of the most important political debates of recent history, including the COVID-19 pandemic, mass migration and transgender issues”.

Within ten years the EU has meanwhile attained a threatening degree of control over global online speech, which is now sufficient “to comprehensively suppress narratives that threaten the power of the European Commission”, the report further states. The EU's Digital Services Act (DSA) marks “the culmination of decades of European efforts to silence political opposition and suppress online narratives that criticize the political establishment”, the report makes clear. The internet, widespread as a mass phenomenon for around 30 years, and social media, growing for 20 years, had at first promised to become a force that would democratize free speech and with it political power.

Ever more pressure exerted on platforms

This development, however, increasingly threatened the established political order of a left-wing cartel that has since gained access to the levers of power throughout the West and pervaded its institutions (this is particularly noticeable in Germany); from the mid-2010s onward, political elites first in the US and then in Europe tried “to counter the emerging populist movements that questioned deeply unpopular policies such as mass migration”.

Recognizing that dealing with this “problem” would take several years, the European Commission began in 2015 to set up various forums in which European regulators could meet directly with technology platforms to discuss how and which content should be “moderated”, in the sense of regulated and censored. Although this was ostensibly intended to combat “misinformation” and “hate speech”, non-public documents submitted to the committee showed, according to the report, that over the past ten years the European Commission exerted direct pressure on platforms to censor lawful political speech in the European Union and abroad. The EU Internet Forum (EUIF), founded in 2015 by the Commission's Directorate-General for Migration and Home Affairs (“DG Home”), then published for the first time in 2023 a handbook for technology companies on the “moderation” of lawful, non-violative speech.

Manipulated elections in Europe

The revelations of the report, whose substance the government-aligned media in this country tellingly almost entirely ignore, framing it once again as absurd US interference and a Trumpist slander of the “highly moral” EU, allow in the view of some dissidents and lawyers only the conclusion that the EU can by now in part be classified as a criminal conspiracy against freedom and fundamental rights. Here too one must be grateful to the Trump administration and the US for holding up a mirror to Europe and opening its eyes with these accounts of fact, which on this side of the Atlantic are systematically hushed up, denied and fought as “right-wing conspiracy theories”. But the Judiciary Committee of the US House of Representatives has denounced in its report still further political presumptions and shameless manipulations by the Brussels Eurocracy and the political elites: it documents that in recent years the EU has manipulated no fewer than eight (!) elections in Europe. This concerns the following countries:

- Slovakia (2023)

- Netherlands (2023 and 2025)

- France (2024)

- Romania (2024)

- Moldova (2024)

- Ireland (2024 and 2025)

The unreformable Moloch EU

“These are the people who go on 24/7 about ‘our democracy’, ‘freedom’ and ‘liberal values’,” comments Tatjana Festerling. She goes on: “Now that the world public sees that Europe, under the thumb of a power-obsessed gang in Brussels, has turned into a run-down, impoverished, Islamized, totalitarian shithole with daily violence on the streets, one can at least hope that they will no longer be able to influence the upcoming elections in Hungary and Bulgaria quite so blatantly. Who wants to be a partner of and investor in an EU in which evil has seized power by coup and will unpredictably secure that power through arbitrary rules and laws?”

The fact is: this EU can no longer be reformed. A united Europe of fatherlands working in partnership, as originally envisaged, is the exact opposite of the Moloch that has been erected here to enforce an agenda-driven, supranational politics of interests. Still: if the US revelations (compiled and solidly substantiated, mind you, by the parliament there, not by “evil Trump”) were to give a boost to further exit movements (“exits”), that would be desirable. This EU cannot be reformed; it must be broken up so that the European idea can take shape anew. This time, though, as a project oriented towards people, towards citizens, not as a plaything of degenerate elites.

Addendum 2 of 05/02/26 =

Wolfgang Wiehle

+++ EU attacks freedom of expression even more deeply: US report reveals shocking truth! +++

An explosive report by the Judiciary Committee of the US House of Representatives is shaking Europe's democratic self-image. Under the title “The EU Censorship Files”, the committee accuses the European Commission and individual governments, including the German one, of deliberately manipulating public opinion via digital platforms. According to the report, companies such as TikTok, Meta or X (formerly Twitter) were put under pressure over a period of ten years to delete content or suppress it algorithmically, not because the content was unlawful but because it represented conservative positions. Even true statements such as “There are two genders” were classified as content to be blocked. The madness knows no bounds any more. Elon Musk called the revelations a “wow” moment and thereby hits the core of the outrage.

According to the report, this did not happen only in the European context. Rather, the influence of European censorship laws reached deep into the United States. Out of fear of reprisals from the EU, platforms censored information that would actually be protected by the First Amendment to the US Constitution. Thus, according to the US criticism, freedom of expression is not only being undermined on our continent; the EU is exporting a repressive model to other democracies. The Americans now warn: whoever tolerates such practices places himself outside the fundamental values of a free democracy. Particularly explosive: according to the report, the Commission met specifically with platform operators before eight elections in six European countries in order to suppress the spread of political statements immediately before election dates, an outrageous direct attack on the democratic principle of the free formation of opinion.

So now it is also about intervening in election campaigns, and with that the (new) summit of censorship, which (let us not fool ourselves!) also threatens to hit the German opposition when it stands on the verge of an election victory. The Judiciary Committee of the US House of Representatives confirms that the EU intervened in the following European elections: Slovakia (2023), Netherlands (2023 and 2025), France (2024), Romania (2024), Moldova (2024), Ireland (2024 and 2025). Where will we end up if Brussels' appetite for censorship gets completely out of hand? This development, moreover, falls on fertile ground, because in Germany restricted spaces for debate, cancel culture and the defamation of dissenting opinions already prevail anyway.

For the AfD it is clear: such a form of opinion control and interference in election campaigns, up to the manipulation of entire elections, is incompatible with a free democratic state under the rule of law. Not only must all communication records between the federal government, the EU and tech companies be disclosed; legal guarantees to secure genuine freedom of expression must also be created. The AfD will defend the right to free speech with the utmost determination, against Brussels, against Berlin, and above all against those who believe they may place their political ideology above the will of the people. Where opinion is no longer free, democracy too is only a facade. That must change, and that is what the AfD stands for!

Addendum 3 of 07/02/26 =

https://reitschuster.de/post/usa-schlagen-alarm-jahrzehnt-der-zensur-in-europa

USA sound the alarm: “Decade of censorship in Europe”. Socially explosive report from Washington

By Kai Rebmann

The accusations are not entirely new, but seldom before have they been voiced as forcefully as now, and backed so well by facts. For at least ten years the EU is said to have been running a campaign to systematically curb freedom of expression and democracy in Europe. The toolbox ranges from the suppression of simple biological facts through censorship of social media to interference in elections in at least six countries, among them states that are existentially important for the continued existence of the EU.

First, the report says, “pressure was successfully exerted on social media platforms to censor true information in the United States.” Then there was “targeted censorship of political content in the US”. And finally there was “interference in elections across Europe”.

These statements form the foundation of a current report by the Judiciary Committee of the US House of Representatives, which has apparently been circulating within the White House for some time. That probably also explains the fiery speech by US Vice President J.D. Vance last year, in which he criticized in the sharpest terms the state of freedom of expression and democracy in Europe, and in Germany in particular.

Elections in Europe under observation

In the report now published, too, the German federal government and its predecessors play a very inglorious role. Berlin, it says, played a leading part in the “decade of European censorship”. And this is not limited “only” to the censorship of free opinion and the exertion of political pressure on social media platforms, but expressly extends to the manipulation of eight elections in six European countries, most of which are also EU members.

Specifically named are Ireland (elections in 2024 and 2025), the Netherlands (2023 and 2025), France, Slovakia, Romania and Moldova. Yes, that is right: these are precisely the countries in which the supposedly “wrong” candidates either won or were at least given good chances ahead of the elections in question.

This became particularly clear in the case of Romania. After the first round of the presidential election on November 24, 2024, the independent candidate Călin Georgescu, who is regarded as pro-Russian, was in the lead there. Massive pressure from Brussels, not least, prompted the Constitutional Court on December 6, 2024 to annul that round, after the same court had still declared it lawful on November 28, 2024. The election had been influenced from Moscow, and Georgescu had also been given preferential treatment on Tiktok and other social media, according to the accusations, which to this day remain unsupported by any evidence. On February 26, 2025 Georgescu was first arrested; two weeks later followed the final exclusion of the promising candidate from the rerun of the election.

And how elections are manipulated, falsified or, as in Romania, if need be also “undone”: the political elite in hardly any European country knows that better than in Germany, whether in the case of Thomas Kemmerich, the former Minister-President of Thuringia who was elected to office in accordance with all democratic conventions and shortly afterwards chased out of it again, or in the case of AfD candidates who for flimsy reasons were and are not even admitted to elections. Such events would have been unthinkable in Germany, and in most other countries of Europe or at least of the EU, until a few years ago. By now they are more the sad rule than the exception.

The truly dangerous thing about this, however, is something else: hardly anyone gets upset about such transparent practices any more, least of all the media! Quite the contrary, it is of all things the so-called “fourth estate” that regularly serves the establishment as a stirrup holder. Those who are vilified and pilloried are not the ones who cause the dirt but the ones who point it out.

EU threatens social media with fines in the millions and bans

Meanwhile the long arm of the censorship machine in Brussels reaches far beyond politics and Europe and does not stop even at the US. The Judiciary Committee's report states, for example: “Because of European censorship laws, Tiktok censors true information in the United States.” This sentence refers specifically to the supposedly “right-wing thesis” that there are two sexes. What until not so long ago was regarded as utterly self-evident and proverbially known to every child has today become a “thesis”. Something, in other words, that could be debated, open-endedly of course.

What is clear is that the EU's censorship campaign, launched by the mid-2010s at the latest, really picked up speed once more with the introduction of the Digital Services Act. A first peak had admittedly been reached before that, namely at the beginning of the Covid crisis. Every doubt about the official versions of the origin of the virus and/or the supposed benefit of the so-called vaccine was branded a conspiracy theory. As a result, the operators of social media found themselves confronted with massive pressure to censor, which came not least from Brussels.

But even just under six years later, freedom of expression online is being kept down. The Judiciary Committee of the US House of Representatives reports, very recently, on a “secret decision” by the European Commission under which “X was fined 140 million euros for defending free speech and threatened with a ban of X in the EU”.

Es waren und sind genau solche Maßnahmen, derer sich die EU zunächst bedient, um danach in offiziellen Versionen von „Freiwilligkeit“ und einem „Konsens“ zu schwadronieren, auf denen entsprechende Vereinbarungen mit den Social-Media-Kampagnen über die Pflicht zur Löschung bestimmter Beiträge beruhe. Der Bericht aus den USA bezeichnet dies als „wichtigen Druckpunkt, um Inhalte in großem Umfang zu zensieren.“

Bleibt noch die Frage, was die USA interessiert, wie es um Meinungsfreiheit und Demokratie in Europa bestellt ist. Die Antworten finden sich im Report des Justizausschusses und lesen sich beispielsweise so: „Wenn Regierungen Druck auf (Social-Media-)Plattformen ausüben, ihre Community-Richtlinien zu ändern, dann ändern sie damit das, was Amerikaner in den Vereinigten Staaten und anderswo posten dürfen.“ Oder: „Seit mehr als einem Jahr warnt der Ausschuss davor, dass europäische Zensurgesetze die freie Meinungsäußerung in den USA im Internet bedrohen. […] Die großen Tech-Konzerne zensieren die Meinungsfreiheit von Amerikanern in den USA, einschließlich wahrer Informationen, um dem weitreichenden europäischen Gesetz zu digitalen Diensten nachzukommen.“

Addendum 3 of 10/02/26

Revelations from the USA:

How the European Union steered and manipulated vaccine propaganda

The second part of the report by the Judiciary Committee of the US House of Representatives reveals, to a shocking extent, how the European Commission influenced public debate during the Covid-19 pandemic, put pressure on American technology companies, and systematically suppressed inconvenient opinions on vaccination.

Coordinated censorship, pressure behind the scenes, and the attempt to silence critics, long before vaccines were even available.

An investigation that exposed the true scale of the problem

The second part of the report by the Judiciary Committee of the US House of Representatives focused on “the extent to which foreign laws, regulations, and court decisions compel or influence companies to censor speech in the United States.” According to the authors, the results of this investigation clearly show that the European Commission operated an extensive censorship system that was not limited to European citizens but also interfered in the operations of American companies.

The report describes a situation in which technology companies were pressured to submit to strict and repressive EU rules. Otherwise they faced “draconian fines.” In this way the European Commission succeeded in enforcing its demands even outside the territory of the Union.

Targeted censorship of vaccine critics

The documents reviewed by the Committee show that during the Covid-19 pandemic the European Commission acted as the central body for censorship and control. Its aim was to enforce a single “correct” interpretation of vaccinations and other measures and to ensure that only approved narratives reached the public sphere.

Everything that deviated from this official line, that is, other opinions, warnings from experts, or critical questions, was, according to the findings, to be systematically suppressed. So it was not just about fighting falsehoods, but about an attempt to eliminate any dissenting debate.

Secret negotiations with American technology companies

The report also describes behind-the-scenes talks and secret correspondence between representatives of the European Commission and American technology giants. A typical example is the communication of October 30, 2020, when Brussels demanded information on how the companies intended to act against “disinformation” about Covid vaccines, at a time when these vaccines were not yet even on the market.

The aim was to determine “where we currently stand in terms of the intensity of the campaign against Covid vaccination” and how the situation might develop. On the basis of this information, a “proactive” communication strategy was to be prepared and, at the same time, support provided to the EU Member States.

Coordination from above and the role of the European Commission’s leadership

The demands also included disclosure of the current state of the rules for platform users and of how discussions are moderated. Brussels gave assurances that this information would be used exclusively to prepare “appropriate measures” and would not be shared with the “general public.”

Because of the alleged “urgency” of the whole matter, the communication was conducted via ordinary email. The European Commission also stressed that it had the full support of the then Vice-President of the Commission, Věra Jourová, who was acting with the knowledge of Commission President Ursula von der Leyen.

In the view of the report’s authors, this document in particular shows clearly how the European Commission steered the entire Covid discourse and systematically directed public debate in the desired direction.

Critical voices silenced and pressure on platforms

Anyone who did not adhere to the officially approved narrative was to be silenced. Online platforms played the role of willing helpers in this process by adjusting their terms of use and actively removing critical posts and accounts.

The report also recalls that attempts to force technology companies to cooperate did not begin with the pandemic. Long before Covid, the European Commission was already trying, through informal arrangements and pressure, to get platforms to meet its demands.

“Voluntary” commitments as hard soft law

Through this approach the European Commission spared itself the need to pass official laws. Instead, it pushed through supposedly “voluntary self-commitments” by the technology companies. In practice, however, these enabled far harsher interference with freedom of expression than would have been possible through normal legislation.

According to the report, this phase amounted to a kind of “hard soft law.” In recent years, however, the EU has abandoned even this restraint and, with instruments such as the Digital Services Act, has created an unprecedented system of control that critics say poses a serious threat to freedom of expression.

Warning about the power of an unelected institution

The American report describes the entire process as an “incredible coup d’état from above.” An unelected institution that, in the authors’ view, nobody explicitly wanted has begun to act like a monstrous authority with almost dictatorial powers, acting arbitrarily and without real oversight.

Since the members of this structure come from the same political class that, in the critics’ view, has led the EU Member States into deep trouble, no resistance is to be expected from those countries. On the contrary: the report speaks of “eager support” for further restrictions on the rights and self-determination of their own citizens.

THE FOREIGN CENSORSHIP THREAT, PART II:

EUROPE’S DECADE-LONG CAMPAIGN TO CENSOR THE GLOBAL INTERNET

AND HOW IT HARMS AMERICAN SPEECH IN THE UNITED STATES

Interim Staff Report of the

Committee on the Judiciary

of the

U.S. House of Representatives

February 3, 2026

EXECUTIVE SUMMARY

The Committee on the Judiciary of the U.S. House of Representatives is investigating

how and to what extent foreign laws, regulations, and judicial orders compel, coerce, or

influence companies to censor speech in the United States.1 As part of this oversight, the

Committee has issued document subpoenas to ten technology companies, requiring them to

produce communications with foreign governments, including the European Commission and

European Union (EU) Member States, regarding content moderation.2 In July 2025, the

Committee published a report detailing how the European Commission—the executive arm of

the EU—weaponizes the Digital Services Act (DSA), a law regulating online speech, to impose

global online censorship requirements on political speech, humor, and satire.3 Since then,

pursuant to subpoena, technology companies have produced to the Committee thousands of

internal documents and communications with the European Commission. These documents show

the extent—and success—of the European Commission’s global censorship campaign.

The European Commission, in a comprehensive decade-long effort, has successfully

pressured social media platforms to change their global content moderation rules, thereby

directly infringing on Americans’ online speech in the United States. Though often framed as

combating so-called “hate speech” or “disinformation,” the European Commission worked to

censor true information and political speech about some of the most important policy debates in

recent history—including the COVID-19 pandemic, mass migration, and transgender issues.

After ten years, the European Commission has established sufficient control of global online

speech to comprehensively suppress narratives that threaten the European Commission’s power.

Prior to the Committee’s subpoenas, these efforts largely occurred in secret. Now, the

European Commission’s efforts have come to light for the first time, informing the Committee

on legislative steps it can take to protect American free speech online.

1 See, e.g., Press Release, H. Comm. on the Judiciary, Chairman Jordan Subpoenas Big Tech for Information on Foreign Censorship of American Speech (Feb. 26, 2025), https://judiciary.house.gov/media/press-releases/chairman-jordan-subpoenas-big-tech-information-foreign-censorship-american.

2 Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr. Timothy Cook, CEO, Apple (Feb. 26,

2025) (attaching subpoena); Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr. Andy Jassy,

President and CEO, Amazon (Feb. 26, 2025) (attaching subpoena); Letter from Rep. Jim Jordan, Chairman, H.

Comm. on the Judiciary, to Mr. Satya Nadella, CEO, Microsoft (Feb. 26, 2025) (attaching subpoena); Letter from

Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr. Christopher Pavlovski, Chairman and CEO, Rumble

(Feb. 26, 2025) (attaching subpoena); Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr.

Sundar Pichai, CEO, Alphabet (Feb. 26, 2025) (attaching subpoena); Letter from Rep. Jim Jordan, Chairman, H.

Comm. on the Judiciary, to Custodian of Records, TikTok (Feb. 26, 2025) (attaching subpoena); Letter from Rep.

Jim Jordan, Chairman, H. Comm. on the Judiciary, to Ms. Linda Yaccarino, CEO, X (Feb. 26, 2025) (attaching

subpoena); Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr. Mark Zuckerberg, CEO,

Meta (Feb. 26, 2025) (attaching subpoena); Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to

Mr. Steve Huffman, CEO & President, Reddit (Apr. 17, 2025) (attaching subpoena); Letter from Rep. Jim Jordan,

Chairman, H. Comm. on the Judiciary, to Mr. Sam Altman, CEO, OpenAI (Nov. 5, 2025) (attaching subpoena).

3 STAFF OF THE H. COMM. ON THE JUDICIARY, 119TH CONG., THE FOREIGN CENSORSHIP THREAT: HOW THE

EUROPEAN UNION’S DIGITAL SERVICES ACT COMPELS GLOBAL CENSORSHIP AND INFRINGES ON AMERICAN FREE

SPEECH (Comm. Print July 25, 2025) (hereinafter “DSA Censorship Report I”).

The DSA is the culmination of a decade-long European effort to silence political opposition

and suppress online narratives that criticize the political establishment.

The DSA took effect in 2023, and the European Commission issued the first-ever fine

under the DSA in December 2025 against X. Although the DSA has been in effect for less than

three years, the fine against X represents the culmination of a decade-long effort by the European

Commission to control the global internet in order to suppress disfavored narratives online.

The internet and social media initially promised to be a force that would democratize

speech, and with it, political power. This development threatened the established political order,

and by the mid-2010s, the political establishments in the United States and Europe sought to

counter rising populist movements that questioned deeply unpopular policies such as mass

migration. Recognizing that tackling this problem would take several years, starting in 2015 and

2016, the European Commission began creating various forums in which European regulators

could meet directly with technology platforms to discuss how and what content should be

moderated. Though ostensibly meant to combat “misinformation” and “hate speech,” nonpublic

documents produced to the Committee show that for the last ten years, the European

Commission has directly pressured platforms to censor lawful, political speech in the European

Union and abroad.

The EU Internet Forum (EUIF), founded in 2015 by the European Commission’s

Directorate-General for Migration and Home Affairs (DG-Home), was among the first of these

initiatives. By 2023, EUIF published a “handbook . . . for use by tech companies when

moderating” lawful, non-violative speech such as:

- “Populist rhetoric”;

- “Anti-government/anti-EU” content;

- “Anti-elite” content;

- “Political satire”;

- “Anti-migrants and Islamophobic content”;

- “Anti-refugee/immigrant sentiment”;

- “Anti-LGBTIQ . . . content”; and

- “Meme subculture.”4

4 EU Internet Forum: The Handbook of Borderline Content in Relation to Violent Extremism, see Ex. 38.

The European Commission also enforced its censorship goals through allegedly voluntary “codes of conduct” on hate speech and disinformation. In 2016, the European Commission established a “Code of Conduct on Countering Illegal Hate Speech Online,” under which platforms including Facebook, Instagram, TikTok, and Twitter (now X) promised to censor vaguely defined “hateful conduct.”5

A “Code of Practice on Disinformation,” in which the same major platforms promised to “dilute the visibility” of alleged “disinformation,” followed in 2018.6 In high-level meetings with platforms, senior European Commission officials explicitly told the platforms that the Hate Speech and Disinformation Codes were intended to “fill [the] regulatory gap” until the EU could enact binding legislation governing platform “content moderation.”7

At around the same time, the most powerful EU Member States, such as Germany, began enacting censorship legislation at the national level.8

5 The EU Code of Conduct on Countering Illegal Hate Speech Online, EUROPEAN COMM’N (June 30, 2016), https://commission.europa.eu/strategy-and-policy/policies/justice-and-fundamental-rights/combatting-discrimination/racism-and-xenophobia/eu-code-conduct-countering-illegal-hate-speech-online_en.

6 2018 Code of Practice on Disinformation, EUROPEAN COMM’N (June 16, 2022), https://digital-strategy.ec.europa.eu/en/library/2018-code-practice-disinformation.

7 Readout of meeting between TikTok and European Commission Vice President Vera Jourova (Apr. 20, 2021), see

Ex. 55.

8 See Imara McMillan, Enforcement Through the Network: The Network Enforcement Act and Article 10 of the

European Convention on Human Rights, 20 CHIC. J. INT. L. 252 (2019).

Later, in 2022 and right as the DSA was about to take effect, the European Commission

updated the 2018 Disinformation Code. Under the new guidelines, platforms had to participate in

a Disinformation Code “Task Force,” which would meet regularly to discuss platforms’

approach to censoring so-called disinformation.9 The Task Force broke into six “subgroups”

focusing on specific disinformation topics, including fact-checking, elections, and

demonetization of conservative news outlets.10 Across all of these subgroups, there were more

than 90 meetings between platforms, censorious civil society organizations (CSOs), and

European Commission regulators between late 2022 and 2024.11

These meetings were a key forum for European Commission regulators to pressure

platforms to change their content moderation rules and take additional censorship steps. For

example, in over a dozen meetings of the Crisis Response Subgroup, the European Commission

inquired about platforms’ “policy changes” “related to fighting disinformation.”12

9 The 2022 Code of Practice on Disinformation, EUROPEAN COMM’N (June 16, 2022).

10 See infra Sec. III.F.ii.

11 Id.

12 See, e.g., Agenda: Meeting of the Permanent Task-Force Crisis Response Subgroup (Dec. 14, 2023), see Ex. 196.

The “voluntary” and “consensus-driven” European censorship regulatory regime is neither

voluntary nor consensus-driven.

Both before and after the DSA’s enactment, the European Commission established

several forums to engage regularly with platforms about content moderation, including the Hate

Speech Code and the Disinformation Code. These forums, which collectively held more than 100

meetings where regulators had the opportunity to pressure platforms to censor content more

aggressively, were purportedly voluntary and intended to achieve “consensus” through a so-

called regulatory dialogue.13 None of that was true. As internal company emails bluntly reveal,

the companies knew that they “[didn’t] really have a choice” whether to join these voluntary

initiatives.14 And the European regulators were running the show: agendas were set “under

(strong) impetus from the EU Commission” and so-called “consensus” was achieved under

heavy pressure from the European Commission.15

Google staff noted that participation in Disinformation Code subgroup meetings was effectively

mandatory, and that the European Commission retained significant control over the agenda and

group decisions.

13 Internal emails among Google staff (June 22, 2023), see Ex. 2; see infra Sec. III.F.

14 Id.

15 Id.

The European Commission successfully pressured major social media platforms to change

their global content moderation rules, directly infringing on American online speech in the

United States.

Most major social media or video sharing platforms are based in the United States16 and

have a single, global set of rules governing what content can or cannot be posted on the site.17

These rules set the boundary for what discourse is allowed in the modern town square, making

them a key pressure point for regulators seeking narrative control to tighten their grip on political

power. Critically, platform content moderation rules are—and effectively must be—global in

scope.18 Country-by-country content moderation is a significant privacy threat, requiring

platforms to know and store each user’s specific location every time he or she logs on.19 In an

age where users can freely use virtual private networks (VPNs) to simulate their location and

protect their personal information, country-by-country content moderation is also ineffective20—

in addition to creating immense costs for platforms of all sizes.21 The internet is global, and

platforms govern themselves accordingly. That means that when European regulators pressure

social media companies to change their content moderation rules, it affects what Americans can

say and see online in the United States. European censorship laws affecting content moderation

rules are therefore a direct threat to U.S. free speech.

Years before the DSA’s enactment, the European Commission made these platform

content moderation rules its primary target. During the COVID-19 pandemic, senior European

Commission officials pressed platforms to change their content moderation rules to globally

censor content questioning established narratives about the virus and the vaccine.22 With the

approval of EU President Ursula von der Leyen and Vice President Vera Jourova, the European

Commission asked platforms how they planned to “update[] . . . [their] terms of service or

content moderation practices (promotion / demotion)” ahead of the rollout of COVID-19

vaccines.23

16 Examples include Facebook, Instagram, YouTube, and X. The notable exception, TikTok, is Chinese-owned, but

is transitioning its U.S. operations to majority-American ownership under a deal negotiated by President Trump. See

Clare Duffy, The deal to secure TikTok’s future in the US has finally closed, CNN (Jan. 23, 2026).

17 See, e.g., Community Standards, META, https://transparency.meta.com/policies/community-standards/ (last visited

Jan. 29, 2026); YouTube’s Community Guidelines, YOUTUBE HELP,

https://support.google.com/youtube/answer/9288567?hl=en (last visited Jan. 29, 2026); The X Rules, X,

https://help.x.com/en/rules-and-policies/x-rules (last visited Jan. 29, 2026); Community Guidelines, TIKTOK,

https://www.tiktok.com/community-guidelines/en (last visited Jan. 29, 2026).

18 Id.; see, e.g., YouTube Community Guidelines enforcement, GOOGLE TRANSPARENCY REPORT (last visited Jan. 29,

2026), https://transparencyreport.google.com/youtube-policy/removals (“YouTube’s Community Guidelines are

enforced consistently across the globe, regardless of where the content is uploaded. When content is removed for

violating our guidelines, it is removed globally.”); Community Guidelines, TIKTOK,

https://www.tiktok.com/support/faq_detail?id=7543604781873371654 (last accessed Jan. 29, 2026) (“Our

Community Guidelines apply to our global community and everything shared on TikTok.”).

19 See Rumble Inc.’s Response to an Order to Produce Records from British Columbia’s Office of Human Rights

(Aug. 31, 2022); Ex. 288 (confirming that some platforms do not currently collect detailed location information of

users).

20 See DSA Censorship Report I, supra note 3, at 31.

21 See, e.g., Trevor Wagener, The High Cost of State-by-State Regulation of Internet Content Moderation,

DISRUPTIVE COMPETITION PROJECT (Mar. 17, 2021).

22 See, e.g., Emails between TikTok staff and European Commission staff (Oct. 30, 2020), see Ex. 48.

23 Id.

Pressure to change content moderation rules related to COVID-19 vaccines came from the

highest levels of the European Commission.

Throughout the European Commission’s censorship campaign, the countless

Disinformation Code, Hate Speech Code, and EU Internet Forum meetings provided more than

100 opportunities for the European Commission to pressure platforms to modify their content

moderation policies and identify which online narratives on vaccines and other important

political topics should be censored.24 For example, on over a dozen occasions over the course of

just three years, the European Commission used the Disinformation Code Crisis Response

Subgroup meetings to press platforms, such as YouTube and TikTok, on their “new

developments and actions related to fighting disinformation,” specifically referencing “policy

changes.”25

A characteristic agenda for meetings between the European Commission, platforms, and NGOs

where the Commission applied pressure to change content moderation policies.

24 See infra Sec. III.

25 See, e.g., Agenda: Meeting of the Permanent Task-Force Crisis Response Subgroup (Dec. 14, 2023), see Ex. 196

(emphasis added).

The pressure on platforms to comply with Europe’s censorship demands only intensified

once the DSA was signed into law in October 2022. The European Commission warned

platforms that they needed to change their global content moderation rules to comply with the

DSA, or else risk fines up to six percent of global revenue and a possible ban from the European

market.26

This decade-long pressure campaign was successful: platforms changed their content

moderation rules and censored speech worldwide in direct response to the DSA and European

Commission pressure. For example, in 2023, TikTok began editing its global Community

Guidelines for the express purpose of “achiev[ing] compliance with the Digital Services Act.”27

TikTok made changes to its global Community Guidelines in order to comply with the DSA.

These new censorship rules went into effect in 2024. In response to the European

Commission’s decade-long censorship campaign, TikTok instituted new rules censoring

“marginalizing speech,” including “coded statements” that “normalize inequitable treatment,”

“misinformation that undermines public trust,” “media presented out of context” and

“misrepresent[ed] authoritative information.”28 These standards are inherently subjective and

easily weaponized against the European Commission’s political opposition. In fact, these internal

documents show that TikTok systematically censored true information around the world to

comply with the European Commission’s censorship demands under the DSA. The document

outlining these changes confirmed that, as “advised by the legal team,” the updates were “mainly

related to compliance with the Digital Services Act (DSA).”29

26 Regulation (EU) 2022/2065 of the European Parliament and of the Council of 19 October 2022 on a Single

Market for Digital Services and Amending Directive 2000/31/EC (Digital Services Act), 2022 O.J. (L 277) Art. 36,

52 (hereinafter “Digital Services Act”).

27 TikTok Community Guidelines Survey, see Ex. 15.

28 TikTok Community Guidelines Update Executive Summary (Mar. 20, 2024), see Ex. 8.

29 Id. (emphasis in original).

In response to European Commission pressure, TikTok modified its global Community Guidelines

to censor true information, directly affecting American speech in the United States.

Documents indicate that these may not have been the only content moderation changes

instituted in response to the DSA, either. During a presentation to the European Commission in

July 2023, TikTok noted that “units with day-to-day activities overlapping the DSA, like Trust &

Safety . . . [were] given new policies, rules, & [standard operating procedures]” to comply with

the DSA.30 These internal documents suggest that TikTok changed significant portions of its

extensive content moderation systems to comply with the European Commission’s demands.

The European Commission’s focus on global content moderation rules remains: in May

2025, the European Commission explicitly told platforms at a closed-door “DSA Workshop” that

“continuous review of [global] community guidelines” was a best practice for compliance with

the DSA.31

The European Commission’s “best practices” for DSA compliance include “continuous”

changes to global content moderation rules.

The European Commission is specifically focused on censorship of U.S. content.

Not only did the European Commission harm American speech in the United States by

pressuring platforms to change their global content moderation policies, but it also specifically

sought to censor American content.

This, too, began during the COVID-19 pandemic. In November 2021, the European

Commission requested information about how TikTok planned to “fight disinformation about the

covid 19 vaccination campaign for children starting in the US,” inquiring specifically about

TikTok’s plans to “remove” certain “claims” about the efficacy of the COVID-19 vaccine in

children.32

30 TikTok Slide Deck: Digital Services Act, Readiness overview for the European Commission (July 17, 2023), see

Ex. 3.

31 European Commission – DSA Systemic Risk Assessment Workshop Readout (May 7, 2025), see Ex. 206.

32 Emails between TikTok staff and European Commission staff (Nov. 5, 2021), see Ex. 58.

A year later, European Commission regulators pressured platforms to remove an

American documentary film about vaccines, demanding that YouTube, Twitter, and TikTok

“check . . . internally” and respond “in writing” why the film had not been censored.33 YouTube

responded to the European Commission promptly, stating that it “removed” the film in question

after the European Commission raised the issue.34 Put plainly, the European Commission treated

American debates around vaccination as within scope of the European Commission’s regulatory

authority.

European Commission regulators urged TikTok to censor U.S. claims about COVID-19 vaccines

for children.

The European Commission’s focus on American speech was not limited to only COVID-

19-related content, either. Political appointees at the highest levels of the European Commission

pressured TikTok to more aggressively censor U.S. content ahead of the 2024 U.S. presidential

election.

33 Emails between European Commission staff and Code of Practice on Disinformation Signatories (Dec. 8, 2022),

see Ex. 96.

34 Id.

Most infamously, then-EU Commissioner for Internal Market Thierry Breton sent a letter to X owner Elon Musk in August 2024 ahead of Musk’s interview with President Donald Trump.35 Breton threatened X with regulatory retaliation under the DSA for hosting a live interview with President Trump in the United States, warning that “spillovers” of U.S. speech into the EU could spur the Commission to adopt retaliatory “measures” against X under the DSA.36 Breton threatened that the European Commission “[would] not hesitate to make full use of [its] toolbox” to silence this core American political speech.37 In response to Breton’s threats, the Committee sent two letters outlining how his threats undermined free speech in the United States and constituted election meddling in the American presidential election.38 Shortly thereafter, Breton resigned.39

Commissioner Breton’s August 2024 letter to Elon Musk warned that the European Commission

would “make full use of [its] toolbox” if X failed to adequately censor Musk’s interview with

President Trump.

The European Commission sought to minimize Breton’s letter to Musk as an unapproved

freelance from a rogue Commissioner acting alone.40 But months before Commissioner Breton’s

35 Letter from Mr. Thierry Breton, Comm’r for Internal Market, European Comm’n, to Mr. Elon Musk, Owner, X

Corp. (Aug. 12, 2024).

36 Id.

37 Id.

38 Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to Mr. Thierry Breton, Comm’r for Internal

Market, European Comm’n (Aug. 15, 2024); Letter from Rep. Jim Jordan, Chairman, H. Comm. on the Judiciary, to

Mr. Thierry Breton, Comm’r for Internal Market, European Comm’n (Sept. 10, 2024).

39 See Lorne Cook, A French Member of the European Commission Resigns and Criticizes President von der Leyen,

AP (Sep. 16, 2024).

40 See Bradford Betz, EU regulator wasn’t cleared to warn Musk against amplifying ‘harmful content’ with Trump X

interview: report, FOX BUSINESS (Aug. 13, 2024).

Former EU Commissioner Thierry Breton

letter, other senior European Commission officials were similarly pressing Big Tech executives

for more information on how they planned to moderate election-related speech ahead of the 2024

U.S. presidential election.

In May 2024, European Commission Vice President Jourova traveled to California to meet with major tech platforms. During this trip, Jourova met with TikTok CEO Shou Chew and TikTok’s Head of Trust and Safety to discuss topics including “election preparations.”41 TikTok sought confirmation on whether the European Commission Vice President was traveling all the way to California to have a meeting that “stay[ed] mostly EU focused,” or whether she wanted to discuss “both EU and US election preparations.”42 The European Commission confirmed that Vice President Jourova wanted to discuss “both.”43

European Commission Vice President Vera Jourova asked to discuss “US election

preparations” with TikTok ahead of the 2024 U.S. presidential election.

41 Emails between TikTok staff and European Commission staff (May 28, 2024), see Ex. 27.

42 Id.

43 Id.

Former EU Vice President

Vera Jourova


The European Commission regularly interferes in EU Member State national elections.

The European Commission works to influence EU Member States by controlling political

speech during election periods. Most strikingly, the European Commission issued DSA Election

Guidelines in 2024 requiring platforms to take additional censorship steps ahead of major

European elections, such as:

- “Updating and refining policies, practices, and algorithms” to comply with EU censorship demands;
- Complying with “best practices” outlined in the Disinformation Code, the Hate Speech Code, and EUIF documents;
- “Establishing measures to reduce the prominence of disinformation”;
- “Adapt[ing] their terms and conditions . . . to significantly decrease the reach and impact of generative AI content that depicts disinformation or misinformation”;
- “Label[ing]” posts deemed to be “disinformation” by government-approved, left-wing fact-checkers;
- “Developing and applying inoculation measures that pre-emptively build resilience against possible and expected disinformation narratives”;44 and
- Taking additional steps to stop “gendered disinformation.”45

These DSA Election Guidelines were branded as voluntary best practices.46 But behind

closed doors, the European Commission made clear that the Election Guidelines were obligatory.

Prabhat Agarwal, the head of the Commission’s DSA enforcement unit, described the Guidelines

as a floor for DSA compliance, telling platforms that if they deviated from the best practices,

they would need to “have alternative measures that are equal or better.”47

Moreover, the European Commission’s election censorship mandates likely had

extraterritorial effects. For example, companies disclose in mandatory reports to the European

44 U.S. agencies used this tactic before the 2020 presidential election to cast a true story about Biden family

influence peddling as Russian disinformation. As a result, Big Tech censored the true story in the weeks preceding

the election. See STAFF OF THE H. COMM. ON THE JUDICIARY AND THE SELECT SUBCOMM. ON THE WEAPONIZATION

OF THE FED. GOV’T OF THE H. COMM. ON THE JUDICIARY, 118TH CONG., ELECTION INTERFERENCE: HOW THE FBI

“PREBUNKED” A TRUE STORY ABOUT THE BIDEN FAMILY’S CORRUPTION IN ADVANCE OF THE 2020 PRESIDENTIAL

ELECTION (Comm. Print Oct. 30, 2024).

45 Commission Guidelines for providers of Very Large Online Platforms and Very Large Online Search Engines on

the mitigation of systemic risks for electoral processes pursuant to Article 35(3) of Regulation (EU) 2022/2065, No.

C/2024/3014 (Apr. 26, 2024) (hereinafter “DSA Election Guidelines”) (emphasis in original).

46 Id.

47 Internal Meta readout of Roundtable on DSA Elections Guidelines (Mar. 1, 2024), see Ex. 243.


Commission the company’s standard election-related “policies, tools, and processes.”48 The

European Commission regularly engages with large social media platforms on what election-

related changes should be made, and hosts DSA-related discussions in non-EU countries.49

Since the DSA came into force in 2023, the European Commission has pressured

platforms to censor content ahead of national elections in Slovakia, the Netherlands, France,

Moldova, Romania, and Ireland, in addition to the EU elections in June 2024.50 Nonpublic

documents produced to the Committee pursuant to subpoena demonstrate how the European

Commission regularly pressured platforms ahead of EU Member State national elections in order

to disadvantage conservative or populist political parties.

Nonpublic meeting agendas and readouts show that the European Commission regularly

convened meetings of national-level regulators, left-wing NGOs, and platforms prior to elections

48 See, e.g., id.; Email from Meta to European Commission (July 10, 2024), see Ex. 166.

49 See, e.g., Email from Meta to European Commission (July 10, 2024), see Ex. 166; Agenda for the 11th Meeting of

the EU Support Hub for International Security and Border Management in Moldova on “Countering Foreign

Information Manipulation and Interference” (Sep. 18, 2024), see Ex. 251.

50 See infra Sec. V.B.iv.


to discuss which political opinions should be censored.51 The European Commission also helped

to organize “rapid response systems” where government-approved third parties were empowered

to make priority censorship requests that almost exclusively targeted the ruling party’s

opposition.52 TikTok reported to the European Commission that it censored over 45,000 pieces

of alleged “misinformation,” including clear political speech on topics including “migration,

climate change, security and defence and LGBTQ rights,” ahead of the 2024 EU elections.53

The 2023 Slovak election is one key example. TikTok’s internal content moderation

guides show that TikTok censored the following “hate speech” while facing European censorship

pressure:

- “There are only two genders”;
- “Children cannot be trans”;
- “We need to stop the sexualization of young people/children”;
- “I think that LGBTI ideology, gender ideology, transgender ideology are a big threat to Slovakia, just like corruption”; and
- “Targeted misgendering.”54

These statements are not “hate speech”—they are political opinions about a current contentious

scientific and medical issue. TikTok itself noted that some of these political opinions were

“common in the Slovak political discussions.”55 Yet, under pressure from the European

Commission, TikTok censored these claims ahead of Slovakia’s national parliamentary elections.

The European Commission took its most aggressive censorship steps during the 2024

Romanian presidential election. In December 2024, Romania’s Constitutional Court annulled the

results of the first round of the previous month’s presidential election, won by little-known

independent populist candidate Calin Georgescu, after Romanian intelligence services alleged

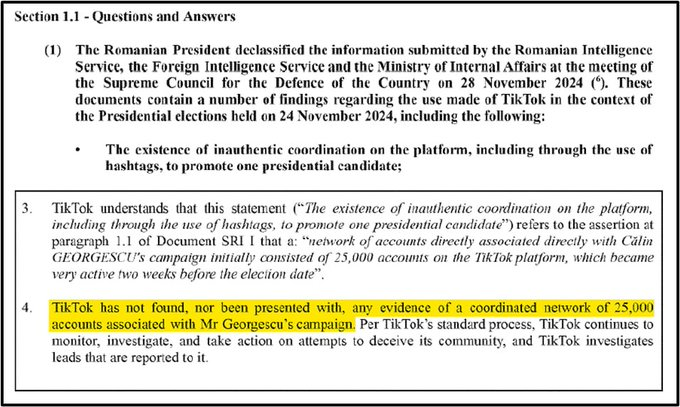

that Russia had covertly supported Georgescu through a coordinated TikTok campaign.56

Internal TikTok documents produced to the Committee seem to undercut this narrative.57 In

submissions to the European Commission, which used the unproven allegation of Russian

interference to investigate TikTok’s content moderation practices, TikTok stated that it “ha[d]

not found, nor been presented with, any evidence of a coordinated network of 25,000 accounts

associated with Mr. Georgescu’s campaign”—the key allegation by the intelligence authorities.58

51 See infra Sec. V.B.

52 Id.

53 TikTok 2024 European Parliament Elections Confidential Report (Sept. 24, 2024), see Ex. 253.

54 TikTok Internal Content Moderation Guidelines for 2023 Slovak Election (Sept. 22, 2023), see Ex. 224.

55 Id.

56 See Thomas Grove & Alan Cullison, Romania Scraps Election After Russian Influence Allegations, WALL ST. J.

(Dec. 6, 2024).

57 See, e.g., TikTok Response to Commission RFI (Dec. 13, 2024), Ex. 268; TikTok Response to Commission RFI

(Dec. 7, 2024), Ex. 266.

58 TikTok Response to Commission RFI (Dec. 7, 2024), see Ex. 266.


By late December 2024, media reports citing evidence from Romania’s tax authority found that

the alleged Russian interference campaign had, in fact, been funded by another Romanian

political party.59 But the election results were never reinstated, and in May 2025, the

establishment-preferred candidate won Romania’s presidency in the rescheduled election.60

TikTok informed the European Commission that it had “not found, nor been presented with, any

evidence” to support Romanian authorities’ key allegation of Russian interference.

The European Commission is continuing to weaponize the DSA to censor content beyond

its borders.

After a decade of censorship, the European Commission continues to abandon Europe’s

historical commitment to free speech.

In December 2025, the European Commission issued its first fine under the Digital

Services Act, targeting X for a litany of ridiculous violations in an obviously pretextual attempt

to penalize the platform for its defense of free speech.61 The European Commission fined X €120

million—slightly below the statutory cap of six percent of global revenue—for alleged violations

59 See Denis Cenusa, Romanian liberals orchestrated Georgescu campaign funding, investigation reveals, BNE

INTELLINEWS (Dec. 22, 2024).

60 See Sarah Rainsford et al., Liberal mayor Dan beats nationalist in tense race for Romanian presidency, BBC

(May 19, 2025).

61 Commission Decision of 5.12.2025 pursuant to Articles 73(1), 73(3) and 74(1) of Regulation (EU) 2022/2065 of

the European Parliament and of the Council of 19 October 2022 on a Single Market for Digital Services and

amending Directive 2000/31/EC (Digital Services Act); Case DSA.100101, DSA.100102 and DSA.100103 – X

(formerly Twitter), C(2025) 8630 final; see Ex. 302 (hereinafter “X Decision”); see also House Judiciary GOP

(@JudiciaryGOP), X (Jan. 28, 2026, 4:09 PM), https://x.com/JudiciaryGOP/status/2016619751183724789.


including “misappropriating” the meaning of blue checkmarks by changing how they were

awarded.62

Moreover, despite the European Commission’s protestations that the DSA applies only in

the EU,63 its X decision enforced the DSA in an extraterritorial manner. The decision asserts that

under the DSA’s researcher access provision, X, an American company, must hand over

American data to researchers around the world—all because of a European law.64 And the

European Commission threatened to ban X in the EU if it does not comply with its censorship

demands.65 The European Commission’s decision to fine X is chilling in at least two distinct

ways: it penalizes X for its global defense of free speech, and it claims the authority to enforce

the DSA globally. It is everything the Committee has warned about for well over a year.

The European Commission accused X of violating the DSA by “misappropriating” the meaning

of a blue checkmark.

Two recent EU initiatives also threaten to worsen the European free speech crisis. Under

President von der Leyen’s “Democracy Shield,” the European Commission will create at least

two new censorship hubs for regulators and left-wing NGOs to pressure platforms to censor

conservative content—the European Center for Democratic Resilience and the European

Network of Fact-Checkers.66 Under the same proposal, the European Commission is seeking to

expand the Disinformation Code to include requirements related to “user verification tools,”

which could effectively end anonymity on the internet by requiring users to show identification

in order to create an account.67 The Commission is also seeking to circumvent normal

democratic processes to create a single, expansive definition of illegal “hate speech” across

Europe.68 This would require every EU member state to adopt the Commission’s definition,

which includes conventional political discourse and “memes.”69 The European censorship threat

shows no signs of abating.

62 Id.

63 See Letter from Thierry Breton, Comm’r for Internal Market, European Comm’n, to Rep. Jim Jordan, Chairman,

H. Comm. on the Judiciary (Aug. 21, 2024).

64 X Decision, supra note 61.

65 Id.

66 Joint Communication to the European Parliament, the Council, the European Economic and Social Committee and

the Committee of the Regions: European Democracy Shield: Empowering Strong and Resilient Democracies,

JOIN(2025) 791 final (hereinafter “Democracy Shield Proposal”).

67 Id. Governments could then compel platforms to produce this information in order to target anonymous speakers

with whom they disagree.

68 Union of Equality: LGBTIQ+ Equality Strategy 2026-2030, EUROPEAN COMM’N, COM(2025) 725 final at 6.

69 DSA Censorship Report I, supra note 3, at 28.


The Committee is conducting its investigation into foreign censorship laws, regulations,

and judicial orders because of the risk they pose to American speech in the United States. The

EU’s DSA, in particular, represents a grave danger to American freedom of speech online: the

European Commission has intentionally pressured technology companies to change their global

content moderation policies, and deliberately targeted American speech and elections. The

European Commission’s extraterritorial actions directly infringe on American sovereignty. The

Committee will continue to develop legislative solutions to defend against and effectively

counter this existential risk to Americans’ most cherished right.


TABLE OF CONTENTS

EXECUTIVE SUMMARY

TABLE OF CONTENTS